by Abdul Mathin Shaik

Oracle Data Integrator with Snowflake

In this article, we will talk about how to load the data into the Snowflake data warehouse using Oracle Data Integrator ODI. So let's get started.

Lately, one of our Snowflake customers was looking to use Oracle Data Integrator to load data into Snowflake from oracle DW. I have been working with Oracle applications such as ODI for years now, so I wanted to explore how to configure ODI to work with Snowflake.

What is Snowflake?

Snowflake is the first data warehouse built for the cloud for all your data & your users. Snowflake is changing the way you store, integrate, and analyze all of your data by all of your business users to get actionable insights.

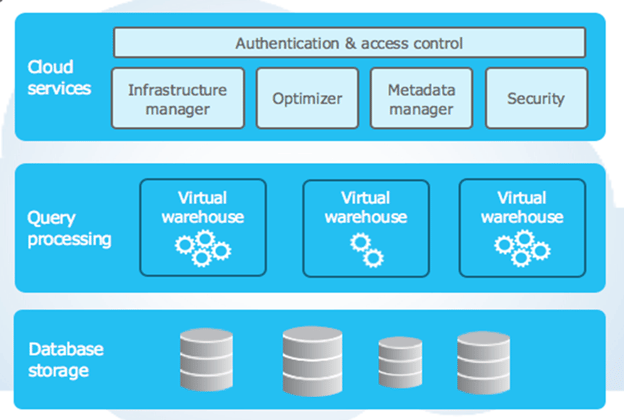

Its patented architecture separates the query processing layer from the disk storage. Queries execute in this layer using the data from the storage layer. Virtual warehouses are MPP compute clusters consisting of multiple nodes with CPU and memory are provisioned on the cloud by Snowflake.

Snowflake's architecture is a hybrid of traditional shared-disk database architecture and shared-nothing database architecture.

Snowflake Architecture:

Snowflake drives Business Intelligence, Analytics, Data Science, Visualisation, and smart decision-making, so you know exactly how your business is performing. By taking all the information from your transactional systems, regardless of data type, and performing the heavy data lifting within a cloud-built data warehouse, where performance, security, scalability, and concurrency are built in, supports your organization's Analytics needs.

Snowflake enables this capability with its patented, multi-cluster shared data architecture. In essence, multiple compute clusters share the same data while eliminating contention between workloads. With instant and near-infinite elasticity, users can automatically scale and never feel a slowdown or disruption with their queries when concurrency surges occur. Snowflake provides an unlimited amount of cloud resources at all times.

Here are some well-known benefits of migrating to Snowflake:

- Scale-up / Scale down on the go and multi-clustering for modern data sharing Built-in security, management, and performance

- Auto resume /auto suspend of the warehouse

- Pay-by-the-second solution that instantly and infinitely scales

- Snowflake's adaptive optimization no indexes, distribution keys or tuning parameters to manage

- Time Travel query data in the past

- Fail-Safe recovering historical data

- A single source of truth with full support for semi-structured data

- Data warehouse-as-a-service with first-class customer support

Connecting Snowflake with ODI:

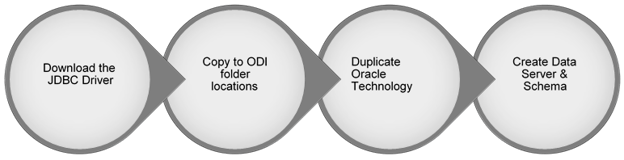

JDBC connectivity enables you to work with Snowflake, just as you would do with other databases in ODI.

Download the JDBC driver from below URL

Based on your ODI Installation home folder/config, place the driver (jar) file in below two locations.

- %/AppData/Roaming/odi/oracledi/userlib

- %Oracle_Home/Middleware/odi/agent/lib

Once placed, close and reopen ODI components for the changes to be reflected ( for the new driver libraries to take effect).

Create Technology for Snowflake:

One of the unique features of ODI is that you can customize and create nearly any object beyond what currently exists including the Technology object. The technology defines the type of data source that the connection will be created under. In the case of Snowflake, simply duplicate the Oracle technology and rename it to Snowflake technology. The sole reason I could do this with full assurance is because the SQL syntax used by Snowflake is almost like that of Oracle.

Note: Please ensure you test out all the possible production-like scenarios before deploying it to production.

Create Data Server & Schema:

Now, we must create a data server the object in ODI that stores the connection information for a given data source.

Right click on the technology created and select New Data Server, give it a name and enter the username/password on the definition tab.

Under the JDBC tab, I need to add the specific JDBC driver for Snowflake net.snowflake.client.jdbc.SnowflakeDriver

JDBC URL for the Snowflake account and database to which I' m connecting:

jdbc:snowflake://snowflakecomputing.com/?warehouse=LOCAL_WH&db=LOCAL_FILES&schema=HR

I've added the virtual warehouse, database, and schema names to the connection string. Now, to test the connection, simply click the Test Connection button on the top left side of the data server window.

The dialog that pops up allows us to choose which agent to test the connection against. When I chose the local (No Agent) basically using ODI studio the connection is successful.

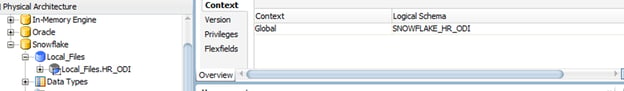

Once the data server is created, proceed with the creation of physical & logical schemas.

Data Loading:

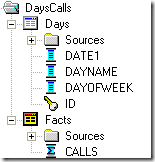

Metadata Import:

Once the connection is established successfully, the next step would be importing the table metadata.

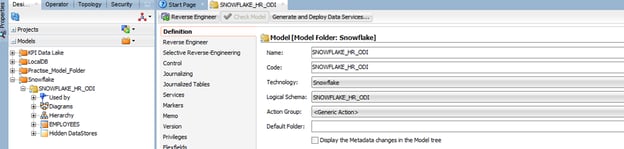

Navigate to Models, create a model folder, and model within it. While defining the model, we need to give the name of logical schema, after you have filled in other details click on Reverse Engineer. This step might take a couple of mins, depending on the number of tables in the schema.

Mapping Creation:

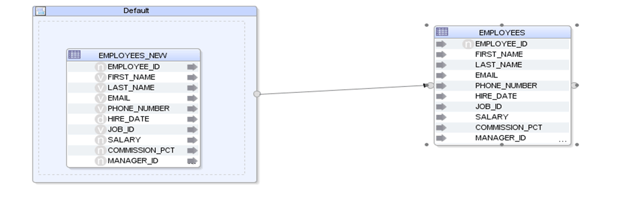

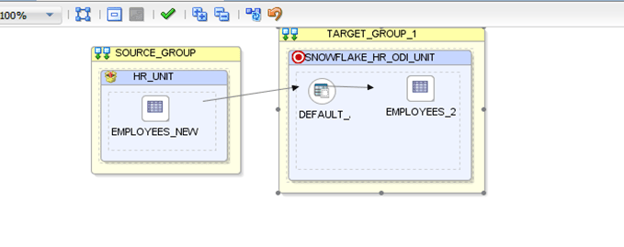

Drag the source and target tables on to the mapping workspace, do the column mappings as needed. Logical & physical flow of the mapping will look like as below.

Logical:

Physical:

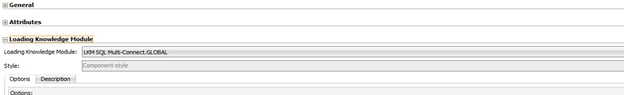

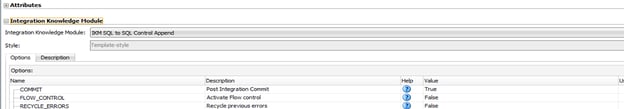

KM selection for loading Oracle to Snowflake is as shown below.

Mapping Execution:

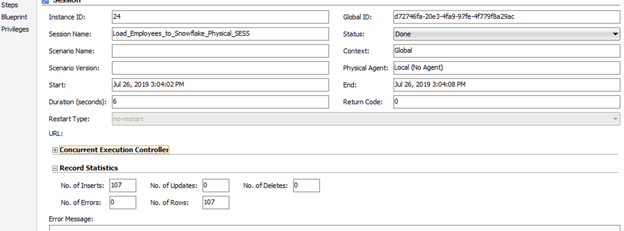

Now that the mapping is ready, execute the mapping by picking localagent. The below screenshot from the operator shows the status of execution.

Challenges:

Below are the few issues that might crop up during the configuration of ODI for Snowflake and/or while executing the mappings.

- Issues while using the Standalone agent.

- we will have to unset the SSL/TCP property in instance.cmd file.

- path: %domain_home\config\fmwconfig\components\ODI\OracleDIAgent1\bin

- Number data types comparison.

The number column in Oracle accepts decimal values as well, whereas in Snowflake, we must explicitly define the scale and precision.

|

NUMBER |

BYTEINT,SMALLINT,INTEGER,BIGINT,FLOAT,NUMERIC,DECIMAL |

|

FLOAT |

FLOAT,DOUBLE,REAL |

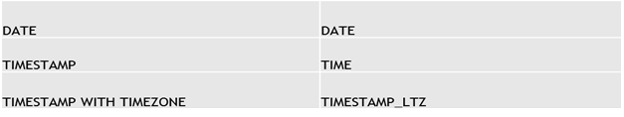

- "Date" data types comparison

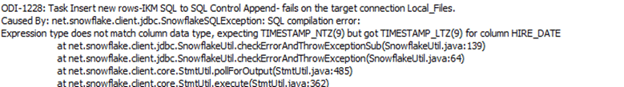

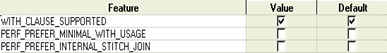

By default, TIMESTAMP data type in Snowflake is TIMESTAMP_NTZ, due to which you might get the below error while loading the data from TIMESTAMP column.

Well, that's it, folks. Please provide your experience and issues in the section below and based on the criticality of the issue. Please watch this section for more exciting posts on Snowflake.

As a Snowflake partner, KPI Partners can help you with Snowflake migration assessment, among others, to achieve your performance and cost-benefit objectives. Let us contact you.

Abdul Mathin Shaik is a Principal Consultant at KPI Partners and has around 10 years of experience in data warehousing and business intelligence field. He has extensively worked on Oracle BI Apps projects using both Informatica & ODI . Also , on the reporting front he has worked on OBIEE & Qlikview . He has also worked on Real-Time reporting solutions for various modules of Oracle EBS .Currently exploring the area of data integration to Snowflake using ODI & Python.

Comments

Comments not added yet!