Modernize Oracle Workloads with Automated Migration to Databricks.

The Oracle-to-Databricks Migration Accelerator transforms complex Oracle PL/SQL stored procedures into optimized PySpark notebooks while migrating schema and historical data together, enabling faster validation and dependable adoption of the Databricks Lakehouse.

Reduce Timeline, Reduce Risk, and Reduce Cost with AI-Driven Conversion and OpenFlow-Orchestrated Execution

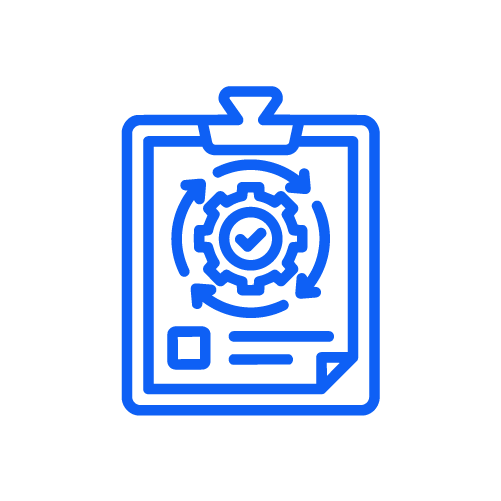

Code Conversion Process Map

Oracle-to-Databricks Migration Accelerator

Key Business Benefits of the KPI Partners Oracle-to-Databricks Migration Accelerator

Reduced Manual Effort

Automates the parsing and conversion of complex Oracle PL/SQL stored procedures into PySpark notebooks.

Faster Time-to-Validation

Accelerates migration timelines by converting logic and migrating schema and historical data together.

High Logic Fidelity

Preserves transformation behavior through deep Oracle grammar parsing and an intermediate representation layer, minimizing rework.

Maintainable, Cloud-Native Output

Produces clean, consistent, and Pythonic PySpark code aligned with Databricks best practices.

Scalable Across Large Estates

Handles high volumes of Oracle stored procedures with predictable delivery and controlled execution effort.

What We Migrate

- Oracle schemas and tables
- Views and derived objects
- PL/SQL stored procedures and functions
- Complex procedural logic (cursors, loops, conditional flows)
- Historical data for validation and testing
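To make the idea of converting procedural logic more concrete, here is a minimal sketch of how a cursor loop with a conditional assignment might be captured in a small intermediate representation and rendered as set-based PySpark. It is an illustration only and does not describe the accelerator's actual internals: the `ConditionalAssignLoop` class, the `emit_pyspark` function, and the `hr.employees` table with its `salary` and `bonus` columns are all hypothetical, and the generated code assumes a `spark` session such as the one a Databricks notebook provides.

```python
from dataclasses import dataclass


# Hypothetical intermediate representation (IR) of one common PL/SQL pattern:
#   FOR r IN (SELECT ... FROM <table>) LOOP
#     IF <condition> THEN <target> := <then_expr>;
#     ELSE <target> := <else_expr>;
#     END IF;
#   END LOOP;
@dataclass(frozen=True)
class ConditionalAssignLoop:
    table: str      # table the cursor iterates over
    target: str     # column assigned inside the loop body
    condition: str  # boolean expression, written as Spark SQL
    then_expr: str  # value when the condition holds, as Spark SQL
    else_expr: str  # value otherwise, as Spark SQL


def emit_pyspark(node: ConditionalAssignLoop) -> str:
    """Render the IR node as PySpark source text.

    The row-by-row loop becomes a single column expression,
    so Spark can evaluate it across the whole table at once.
    """
    return (
        "from pyspark.sql import functions as F\n"
        "\n"
        f"df = spark.table({node.table!r})\n"
        "df = df.withColumn(\n"
        f"    {node.target!r},\n"
        f"    F.when(F.expr({node.condition!r}), F.expr({node.then_expr!r}))\n"
        f"     .otherwise(F.expr({node.else_expr!r})),\n"
        ")\n"
    )


# Illustrative input: table and column names are made up for this example.
node = ConditionalAssignLoop(
    table="hr.employees",
    target="bonus",
    condition="salary > 50000",
    then_expr="salary * 0.10",
    else_expr="0",
)
generated = emit_pyspark(node)
print(generated)
```

Separating the parsed pattern from the code that renders it is one plausible reason to use an intermediate layer: the same node could be rendered in different output styles without re-parsing the original PL/SQL.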

Where This Accelerator Fits Best

- Enterprises modernizing large, legacy Oracle estates
- Programs moving from procedural PL/SQL to scalable Spark-based processing
- Organizations adopting Databricks for advanced analytics, AI, or GenAI workloads
- Teams seeking to reduce Oracle dependency while preserving business logic
- Phased modernization initiatives requiring predictable outcomes and low risk

Get in touch with our experts

Book one of our KPI experts for a personalized walkthrough
